In a microservices architecture such as API-Led connectivity with MuleSoft, one of the key aspects is a solid monitoring mechanism capable of raising alerts in case of an API outage.

The Health Check Pattern

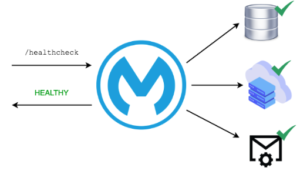

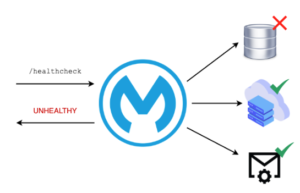

The most common way to achieve this is to implement the Health Check Pattern, which consists of a series of special REST endpoints, such as /healthcheck or /health, that return information on the availability of the microservice and its dependencies instead of performing business operations.

All systems are up and running

DB Failure

In its simplest form, a health check returns only the global status of the network: if at least one of the systems involved is down, the whole status is unhealthy. As you can imagine, this is not much help when troubleshooting, because it does not pinpoint the failure in a complex application network.
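To make the limitation concrete, here is a minimal sketch of such a global-only check. The function name and the component names are assumptions made up for illustration; they are not part of any connector.

```python
from typing import Dict


def global_status(component_up: Dict[str, bool]) -> str:
    """Collapse the state of every component into one global status."""
    return "HEALTHY" if all(component_up.values()) else "UNHEALTHY"


# Hypothetical component names, used only for this example.
all_up = {"sapi-1": True, "db": True, "broker": True}
db_down = {"sapi-1": True, "db": False, "broker": True}
broker_down = {"sapi-1": True, "db": True, "broker": False}

print(global_status(all_up))
print(global_status(db_down))
print(global_status(broker_down))
```

The last two calls return the same value even though different systems are down, which is exactly why a single flag is of little use for troubleshooting.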

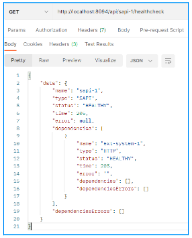

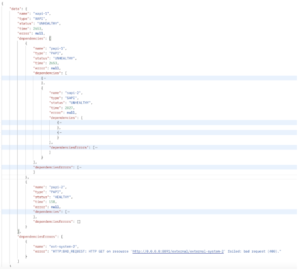

The solution is for the health check to return a nested, structured data model that lists detailed information about the status of each component.

    {
      "name": "api name",
      "type": "XAPI | PAPI | SAPI | DB | HTTP | JMS | AMQP",
      "status": "HEALTHY | UNHEALTHY",
      "time": "response time",
      "dependencies": [
        {
          "name": "dependency name",
          "type": "XAPI | PAPI | SAPI | DB | HTTP | JMS | AMQP",
          "status": "HEALTHY | UNHEALTHY",
          "time": "response time",
          "error": "error",
          "dependencies": [],
          "dependenciesErrors": []
        },
        {
          "name": "dependency name",
          "type": "XAPI | PAPI | SAPI | DB | HTTP | JMS | AMQP",
          "status": "HEALTHY | UNHEALTHY",
          "time": "response time",
          "dependencies": [],
          "dependenciesErrors": []
        }
      ],
      "dependenciesErrors": [
        {
          "name": "dependency name",
          "error": "error"
        }
      ]
    }

In this way, we not only get the global status at a glance, but if something is broken or does not respond, we can also navigate the data model and find which system is experiencing an outage.
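Navigating the model can be as simple as a recursive walk. The sketch below is one possible way to do it in Python, assuming a response shaped like the data model described here; the function name, the sample names other than ext-system-2, and the error text are illustrative assumptions.

```python
from typing import Any, Dict, List


def find_failures(node: Dict[str, Any], path: str = "") -> List[str]:
    """Return the paths of the deepest UNHEALTHY components in the tree."""
    here = f"{path}/{node['name']}" if path else node["name"]
    if node.get("status") != "UNHEALTHY":
        return []
    failing_children = [
        failure
        for child in node.get("dependencies", [])
        for failure in find_failures(child, here)
    ]
    # An unhealthy node with no unhealthy children is itself the failure point.
    return failing_children or [here]


# Hypothetical response, shaped like the nested data model.
response = {
    "name": "papi-1", "type": "PAPI", "status": "UNHEALTHY",
    "dependencies": [
        {"name": "sapi-1", "type": "SAPI", "status": "HEALTHY", "dependencies": []},
        {"name": "sapi-2", "type": "SAPI", "status": "UNHEALTHY", "dependencies": [
            {"name": "ext-system-2", "type": "HTTP", "status": "UNHEALTHY",
             "error": "connection refused", "dependencies": []},
        ]},
    ],
}

print(find_failures(response))  # ['papi-1/sapi-2/ext-system-2']
```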

Health check endpoints can then be invoked periodically by a scheduler or a monitoring tool in order to get constant updates on the state of our application network and send alerts in case of an outage.
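As a rough illustration of the scheduling idea, here is a small polling loop. It is only a sketch: `check` and `alert` are hypothetical callables standing in for a real call to the health check endpoint and a real alerting channel, and a production setup would more likely rely on a monitoring tool.

```python
import time
from typing import Callable, Dict, List


def monitor(check: Callable[[], Dict], alert: Callable[[Dict], None],
            interval_seconds: float, max_runs: int) -> List[str]:
    """Call `check` every `interval_seconds` and alert on each unhealthy result."""
    history = []
    for run in range(max_runs):
        result = check()
        history.append(result["status"])
        if result["status"] != "HEALTHY":
            alert(result)
        if run < max_runs - 1:
            time.sleep(interval_seconds)
    return history


# Canned responses replace a real HTTP call to the health check endpoint.
responses = iter([
    {"name": "xapi-1", "status": "HEALTHY"},
    {"name": "xapi-1", "status": "UNHEALTHY"},
    {"name": "xapi-1", "status": "HEALTHY"},
])
alerts: List[Dict] = []
history = monitor(lambda: next(responses), alerts.append,
                  interval_seconds=0.01, max_runs=3)
print(history)
print(len(alerts))
```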

Sounds like a perfect fit for an API-Led architecture, but what is the best way to create solid and reusable components in MuleSoft?

Yes, build custom connectors through the SDK.

So let me introduce you to the Florence Next Health Check Connector!

The Journey

Let’s start from the beginning: what brought us to build the connector?

The answer is that we wanted a well-tested and reusable solution capable of providing the health check pattern implementation to our customers.

During the past years, we found ourselves re-implementing the health check pattern for every new customer, and soon we realized that we needed a way to make this code reusable.

The first approach was to create a common library and a set of RAML fragments that could be imported into our API contracts. This was already a big improvement over copying and pasting code from app to app, but it wasn’t enough.

Libraries have limitations. For example, it was harder for new developers to understand how to use the library, and it was almost impossible to create built-in operations for typical use cases.

So here we are: we got our hands dirty with Java code and built the connector. At the beginning, only a couple of external systems were supported, but then we added more, and hopefully many more will be added in the future (we want your help with this!).

Connector Features

Now the juicy part! Let’s take a look at what’s inside the connector and which operations it supports.

Operation: Create Health Check Object

This operation will prepare the payload to output the result of the health check and can be used in combination with other operations to aggregate results and prepare the final response.

It can be customized to support a specific response standard.

Operation: HTTP

This operation verifies the availability of an external REST service. It can reuse the global configuration of the MuleSoft HTTP Request Connector and supports custom headers, URI params, and query params.
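To give an idea of what an HTTP probe of this kind involves, here is a minimal Python sketch built on the standard library. It is not the connector's implementation: the function name, its parameters, and the millisecond unit for the time field are assumptions for illustration.

```python
import time
import urllib.error
import urllib.request
from typing import Dict, Optional


def check_http(name: str, url: str, headers: Optional[Dict[str, str]] = None,
               timeout: float = 5.0) -> Dict:
    """Probe `url` with a GET and report the status and response time in ms."""
    request = urllib.request.Request(url, headers=headers or {}, method="GET")
    result = {"name": name, "type": "HTTP"}
    started = time.monotonic()
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            response.read()
        result["status"] = "HEALTHY"
    except (urllib.error.URLError, OSError) as exc:
        # HTTPError (4xx/5xx responses) is a subclass of URLError,
        # so error responses are reported as UNHEALTHY as well.
        result["status"] = "UNHEALTHY"
        result["error"] = str(exc)
    result["time"] = round((time.monotonic() - started) * 1000)
    return result
```

Calling `check_http("ext-system-1", "http://localhost:8081/health")` (a made-up name and address) would return a dictionary with the name, type, status, and time fields, plus an error field when the call fails.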

Operation: JMS

This operation checks the availability of a JMS queue service by publishing a message to a configured queue (which can be created if it does not exist) and then consuming the same message. It can share the global configuration used by the MuleSoft JMS Connector.

Operation: AMQP

Similar to the JMS operation, it publishes and consumes a message on an AMQP Queue Exchange.

Operation: DB

This operation checks the connectivity to a remote SQL Database by executing a custom SQL statement.
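The idea of probing a database with a custom statement can be sketched as follows. To keep the example runnable without a server, it uses Python's built-in SQLite module as a stand-in for a remote SQL database; the function name, the `SELECT 1` default, and the database name are assumptions.

```python
import sqlite3
import time
from typing import Dict


def check_db(name: str, database: str, statement: str = "SELECT 1") -> Dict:
    """Run `statement` against a SQLite database and report the outcome."""
    result = {"name": name, "type": "DB"}
    started = time.monotonic()
    try:
        connection = sqlite3.connect(database, timeout=5)
        try:
            connection.execute(statement).fetchall()
        finally:
            connection.close()
        result["status"] = "HEALTHY"
    except sqlite3.Error as exc:
        result["status"] = "UNHEALTHY"
        result["error"] = str(exc)
    result["time"] = round((time.monotonic() - started) * 1000)
    return result


# An in-memory database stands in for the remote one.
print(check_db("orders-db", ":memory:")["status"])
print(check_db("orders-db", ":memory:", "SELECT * FROM missing_table")["status"])
```

A custom statement is useful because it lets the check verify more than the connection itself, for example that a specific table is reachable.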

Operation: Internal Services

This operation checks internal Mule services in an API-Led architecture. It invokes the health check endpoint on the underlying APIs.

Use Case

Let’s see the connector in action!

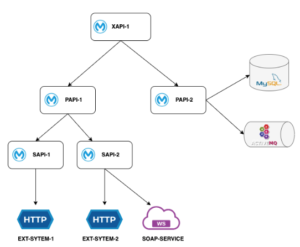

The diagram shows a typical three-layered API-Led architecture composed of 1 Experience API, 2 Process APIs, and 2 System APIs.

Then, of course, we have a bunch of external systems:

- 2 HTTP Services

- 1 SOAP Service

- 1 ActiveMQ message broker

- 1 MySQL database

Now let’s assume that we want to ensure that the full end-to-end flow is up and running by calling the health check endpoint at the experience level.

To achieve that, we first need to implement a health check at the lower level and then orchestrate it at the upper level.

Below you will see the health check implementation flow for each component of the given app network.

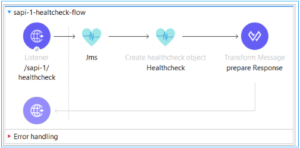

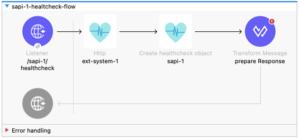

SAPI-1

It’s connected to only one external HTTP REST service, so the health check implementation is pretty straightforward.

An HTTP operation plus the Create Health Check Object operation.

It returns a response like the following:

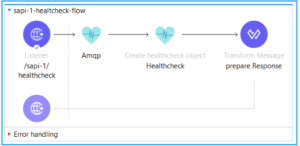

SAPI-2

In this case we need to check that both the REST and the SOAP services are available. To do so, we use the HTTP operation and the HealthCheck Scope inside a Scatter-Gather (so that the checks run in parallel), and then the results are aggregated by Create Health Check Object.
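Outside of Mule, the same "run the checks in parallel, then aggregate" idea can be sketched with a thread pool. This is only an analogy to the Scatter-Gather flow, not how the connector works internally; the two stub checks, the name ext-system-1, and the error text are assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def run_in_parallel(checks: List[Callable[[], Dict]]) -> List[Dict]:
    """Run every check on its own thread and return results in input order."""
    with ThreadPoolExecutor(max_workers=max(1, len(checks))) as pool:
        futures = [pool.submit(check) for check in checks]
        return [future.result() for future in futures]


# Stub checks with canned results; real ones would call the external services.
def check_ext_system_1() -> Dict:
    return {"name": "ext-system-1", "type": "HTTP", "status": "HEALTHY"}


def check_ext_system_2() -> Dict:
    return {"name": "ext-system-2", "type": "HTTP",
            "status": "UNHEALTHY", "error": "timeout"}


dependencies = run_in_parallel([check_ext_system_1, check_ext_system_2])
all_healthy = all(d["status"] == "HEALTHY" for d in dependencies)
sapi_2 = {
    "name": "sapi-2",
    "type": "SAPI",
    "status": "HEALTHY" if all_healthy else "UNHEALTHY",
    "dependencies": dependencies,
}
print(sapi_2["status"])
```

Running the checks concurrently means the total response time is bounded by the slowest dependency rather than by the sum of all of them.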

Response:

Oh no! ext-system-2 is failing 🙁

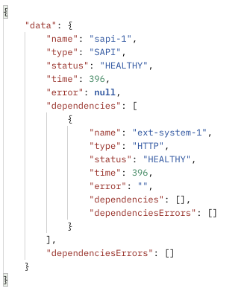

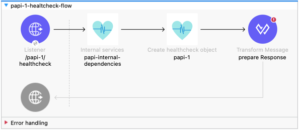

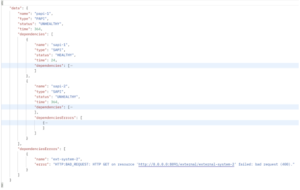

PAPI-1

Moving to the middle layer, we can rely on the implementation done at the lower layer. In this case we don’t have any additional external systems to check, so we can use the Internal Services operation and aggregate the result.

Response:

As you can see, the unhealthy status bubbles up through the layers, and we can easily find which component is broken by checking the dependenciesErrors object.
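One way to picture the bubbling is a parent that copies every error reported below it into its own dependenciesErrors list. The sketch below is an assumption about how such an aggregation could work, written for illustration; it is not taken from the connector's source, and the dependency wiring and error text are made up.

```python
from typing import Any, Dict, List


def build_health_object(name: str, api_type: str,
                        children: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Wrap child results in a parent object, lifting every error to the top."""
    errors: List[Dict[str, Any]] = []
    for child in children:
        if "error" in child:
            errors.append({"name": child["name"], "error": child["error"]})
        # Errors the child already collected from its own dependencies.
        errors.extend(child.get("dependenciesErrors", []))
    unhealthy = bool(errors) or any(c["status"] != "HEALTHY" for c in children)
    return {
        "name": name,
        "type": api_type,
        "status": "UNHEALTHY" if unhealthy else "HEALTHY",
        "dependencies": children,
        "dependenciesErrors": errors,
    }


# Hypothetical wiring: papi-1 -> sapi-2 -> two external systems.
ext_1 = {"name": "ext-system-1", "type": "HTTP", "status": "HEALTHY"}
ext_2 = {"name": "ext-system-2", "type": "HTTP",
         "status": "UNHEALTHY", "error": "timeout"}
sapi_2 = build_health_object("sapi-2", "SAPI", [ext_1, ext_2])
papi_1 = build_health_object("papi-1", "PAPI", [sapi_2])

print(papi_1["status"])
print(papi_1["dependenciesErrors"])
```

With this shape, the top-level caller can read the failing component from dependenciesErrors without walking the whole tree.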

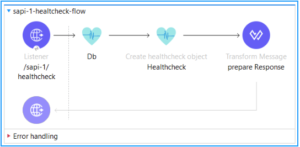

PAPI-2

It is common, especially in asynchronous API implementations, to have a persistence layer connected to the process layer of an API-Led architecture. In this case we assume a database and a message broker.

Again, we can leverage the Health Check Connector’s dedicated operations.

Response:

XAPI-1

From our Experience API, we just need to use the Internal Services operation to check the underlying apps, and we get the full status of the whole application network!

Response:

Give it a try!

We really encourage you to give our connector a try. It is available on GitHub under the GPL3 license here:

https://github.com/florencenext/mule-4-healthcheck-connector

You will also find technical documentation on how to install and use the connector.

We would really appreciate your feedback, and we are open to suggestions and contributions to improve it.